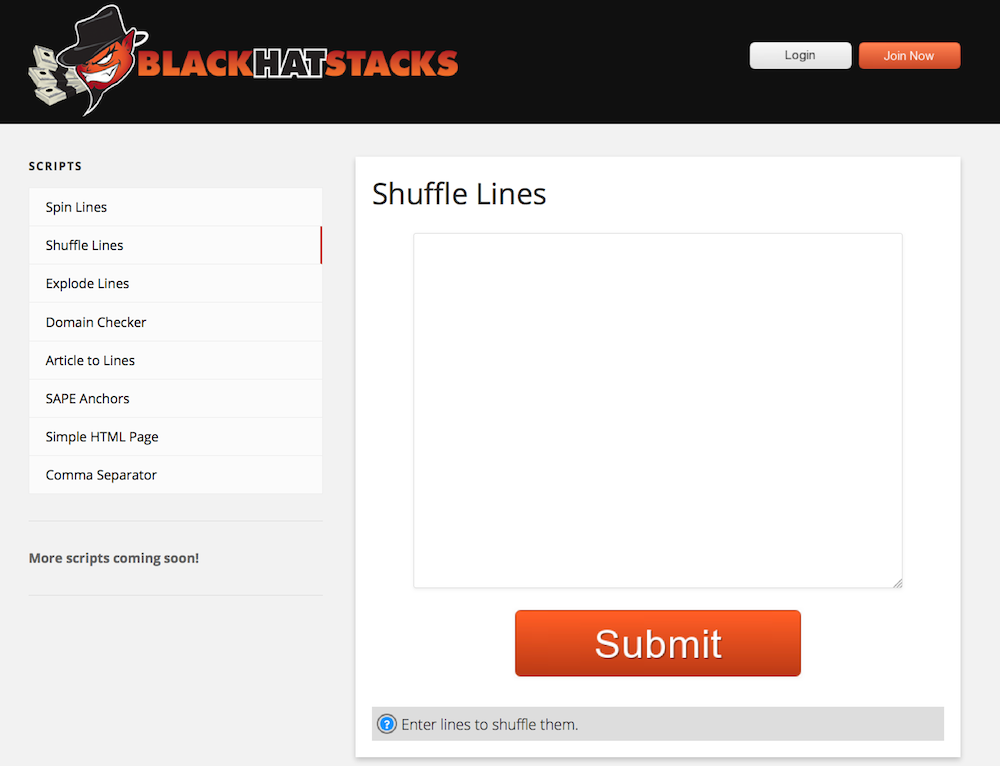

First of all, you will need Scrapebox. GScraper will also work, but we use Scrapebox. Since you have no verified URLs in your GSA SER yet, you will need a head start, after which the lists will grow quickly.

Begin by selecting some target keywords. For instance, you can use all of the article categories from ezinearticles. Copy all of them and paste them into a text file. Now open Scrapebox and import the file into the Harvester section. Leave it at that for now, because it's time to get the engine footprints from GSA SER. Go to your GSA Search Engine Ranker -> -> -> ->.

Now go back to Scrapebox and click the "M" button above the radio button. This simply adds each of your keywords to each of the entries in the footprints file. Select all 48540 resulting keywords (you will have far more, because I only added footprints from one engine), then copy and paste them into a new file. Next, randomize the lines: choose that file as the source file and name a target file. This randomizes the order of the lines from the source file, so as not to tip off the search engines to any search pattern. As the snapshot above shows, the merged list searches many times for the same footprint and only changes the keyword.

At this point we are ready to scrape our target URLs. Make sure you have some good private proxies (BuyProxies are the ones I recommend for this purpose too), and let it roll. With 50 private proxies, I let Scrapebox run at 7 connections. At this rate, my proxies have never died and have always scraped to the very end of the keyword list. Bear in mind that this may take quite a while. At the end, assuming you have good proxies, you will be looking at a very large number of target URLs. And now it's time to create verified URLs from them.
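The merge step described above pairs every footprint with every keyword. Here is a minimal Python sketch of that idea, not Scrapebox's actual implementation. The function name `merge_footprints`, the sample footprints and keywords, and the choice to join each pair with a single space are all assumptions made for illustration.

```python
from itertools import product


def merge_footprints(footprints, keywords):
    """Return one query per (footprint, keyword) pair.

    Assumption: each pair is joined with a single space; the exact
    output format of the tool's merge feature may differ.
    """
    return [f"{fp} {kw}" for fp, kw in product(footprints, keywords)]


# Hypothetical sample data, not taken from the article.
footprints = ['"Powered by ExampleCMS"', 'inurl:"/guestbook"']
keywords = ["gardening", "home repair", "travel"]

queries = merge_footprints(footprints, keywords)
for query in queries:
    print(query)
```

The list grows as footprints × keywords, which is why a single engine's footprints can already produce tens of thousands of lines, and why the same footprint repeats with only the keyword changing.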

I'm by no means an expert, but I use Scrapebox to scrape for targets to import into SER, and this is my process. (You probably know most of this already, but I'll post it anyway to help others who may not.) This assumes that you have already gone through the 'submitted vs verified' lists and worked out which engines perform best.

1. Click Options > Advanced > Tools > Search online for urls > Add predefined footprint.
2. Find the footprints you want, add them to the list, and copy them from the SER popup into Notepad (one per line).
3. Copy the list to Excel, starting from cell A1.
4. In cell B1, type a space followed by "%KW%" (don't type the word "space", just leave a space at the beginning).
5. Drag cell B1 down to the bottom of the list.
6. In cell C1, type =A1&B1 and drag it down the list. You should now have a list in column C that looks something like this: "Top Rated" "Most Discussed" "Browse by Tag" "%KW%"
7. Copy the whole of column C to Notepad and save it as something memorable, like "GSA social scraping footprints".
8. Open Scrapebox and set the delay, proxies, connections, search engines, etc.
9. Hit the merge button and import the list of footprints that you just made with Excel. Because you used the placeholder "%KW%", you should now see your list of footprints with your keywords added onto the end in quotes.
10. Set Scrapebox to harvest overnight and go to bed, have a beer, or whatever else flicks your switch. When you wake up in the morning you should have about 1 million URLs (depending on your settings).
11. Use the remove duplicates feature in Scrapebox.
12. Export the URLs as a text file and save them.
13. Open SER, right-click the project(s) you want to use them in, and choose Modify project > Import Target URLs, then choose the scraped URL file.

Sit back and leave SER to be the little mean green spamming machine that she is.
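The spreadsheet and cleanup steps above are plain text manipulation, so they can be sketched in Python as well. The three helpers below (`add_placeholder`, `expand_placeholder`, `remove_duplicates`) are hypothetical names for illustration, not part of SER or Scrapebox. They mirror the `=A1&B1` concatenation, the substitution of `%KW%` with each keyword, and the removal of duplicate URLs. Whether the real merge feature substitutes the placeholder in exactly this way is an assumption.

```python
def add_placeholder(footprints, placeholder="%KW%"):
    """Mirror the =A1&B1 step: append a space and the quoted placeholder."""
    return [f'{fp} "{placeholder}"' for fp in footprints]


def expand_placeholder(templates, keywords, placeholder="%KW%"):
    """Replace the placeholder in every template with every keyword."""
    return [t.replace(placeholder, kw) for t in templates for kw in keywords]


def remove_duplicates(urls):
    """Drop blank lines and exact duplicates, keeping first-seen order."""
    seen = set()
    unique = []
    for url in urls:
        key = url.strip()
        if key and key not in seen:
            seen.add(key)
            unique.append(key)
    return unique


# Footprint line taken from the example in the post; keywords are made up.
templates = add_placeholder(['"Top Rated" "Most Discussed" "Browse by Tag"'])
queries = expand_placeholder(templates, ["gardening", "travel"])

# Hypothetical harvested URLs containing a duplicate and a blank line.
harvested = ["http://example.com/a", "http://example.com/b",
             "http://example.com/a", ""]
unique_urls = remove_duplicates(harvested)
```

Keeping the placeholder inside the quotes means each keyword is searched as an exact phrase alongside the footprint.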